Every year, I spend some time architecting and writing Terraform, Sentinel, CloudFormation, and Python security controls for cloud-native workloads on the major IaaS providers, including Aliyun and Rackspace. This year, I decided to break apart my IaaS assumptions, especially with AWS and Docker Enterprise focusing heavily on their security capability maturity models, and I paid particular attention to multi-tenant workloads. From a 30,000-foot view, these are my pillars:

Trust

Segregation

Confidentiality & Privacy

Visibility & Manageability

Portability & Interoperability

Reliability & Resiliency

Identity

Compliance

At the 24,000-foot view, we apply security models, patterns, and techniques for compliance-driven security, as observed by Hoff:

Skydiving quickly down to 1,000 feet, where the parachute is released, the picture looks like this for any environment, including hybrid and on-premises models, with the corresponding layers of abstraction:

With this many projects addressing different aspects of cloud computing, the problem quickly becomes one of researching and developing multi-tenant services. The major challenge is building a frictionless, cost-effective security model around the various multi-tenancy patterns. For argument's sake, let's assume this is the typical flow for a multi-tenant R&D effort:

Design Time: At design time, each pattern treats the tenant as a logical entity. These patterns focus on tenant-aware application design, which allows high-level design decisions to be made so that each tenant can be implemented, measured, and manipulated independently. Patterns included in this category are:

Tenant as a state

Tenant aware measurement

Tenant aware logging.
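As a minimal sketch of the three design-time patterns, the Python below keeps the tenant as request-scoped state, counts requests per tenant, and stamps every log line with the tenant id. The names `current_tenant`, `TenantFilter`, `handle_request`, and the tenant ids are hypothetical and chosen only for illustration; a real service would derive the tenant from an authenticated request.

```python
import contextvars
import io
import logging
from collections import Counter

# Tenant as a state: the active tenant travels with the execution context.
# (All names here are hypothetical, for illustration only.)
current_tenant = contextvars.ContextVar("current_tenant", default="unknown")

# Tenant-aware measurement: one counter per tenant.
request_counts = Counter()


class TenantFilter(logging.Filter):
    """Tenant-aware logging: attach the active tenant id to every record."""

    def filter(self, record):
        record.tenant = current_tenant.get()
        return True


def build_logger(stream):
    """Create a logger whose output lines are prefixed with the tenant id."""
    logger = logging.getLogger("tenant_demo")
    logger.setLevel(logging.INFO)
    logger.propagate = False
    logger.handlers.clear()
    handler = logging.StreamHandler(stream)
    handler.setFormatter(logging.Formatter("tenant=%(tenant)s %(message)s"))
    handler.addFilter(TenantFilter())
    logger.addHandler(handler)
    return logger


def handle_request(logger, tenant_id, action):
    """Run one unit of work on behalf of a single tenant."""
    token = current_tenant.set(tenant_id)
    try:
        request_counts[tenant_id] += 1
        logger.info("action=%s", action)
    finally:
        # Always restore the previous tenant so state never leaks across requests.
        current_tenant.reset(token)


log_stream = io.StringIO()
demo_logger = build_logger(log_stream)
handle_request(demo_logger, "acme", "login")
handle_request(demo_logger, "globex", "export")
handle_request(demo_logger, "acme", "logout")
```

Resetting the context variable in a `finally` block is the part that matters for security: the tenant identity is restored even when the handler raises, so one tenant's context is not carried into the next request.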

Development Time: Patterns in this category work with components of the application to incorporate various tenant management aspects. These patterns require modifying the application components. The patterns in this category are:

At least one multi-tenant component

Multi-tenant database schema

Re-entrant and tenant-specific components
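To make the development-time patterns concrete, here is a small sketch of a shared, multi-tenant database schema accessed through a tenant-specific component. It uses an in-memory SQLite database purely so that it runs anywhere; the `users` table, the `TenantUsers` class, and the tenant ids are hypothetical and not taken from any particular product.

```python
import sqlite3

# One shared table for all tenants; tenant_id is part of the primary key.
# (Schema and names are hypothetical, for illustration only.)
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE users ("
    " tenant_id TEXT NOT NULL,"
    " email TEXT NOT NULL,"
    " PRIMARY KEY (tenant_id, email))"
)


class TenantUsers:
    """Tenant-specific component: every query is scoped to one tenant id."""

    def __init__(self, connection, tenant_id):
        self._conn = connection
        self._tenant_id = tenant_id

    def add(self, email):
        # Parameterized query; the tenant id is never supplied by the caller.
        self._conn.execute(
            "INSERT INTO users (tenant_id, email) VALUES (?, ?)",
            (self._tenant_id, email),
        )

    def list_emails(self):
        rows = self._conn.execute(
            "SELECT email FROM users WHERE tenant_id = ? ORDER BY email",
            (self._tenant_id,),
        )
        return [row[0] for row in rows]


acme = TenantUsers(conn, "acme")
globex = TenantUsers(conn, "globex")
acme.add("a@acme.example")
acme.add("b@acme.example")
globex.add("a@globex.example")

acme_emails = acme.list_emails()
globex_emails = globex.list_emails()
```

Note that the isolation in this sketch lives entirely in application code: a single query that forgets the `tenant_id` predicate would read across tenants, which is exactly the kind of trade-off weighed later in this post.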

Runtime: Patterns that focus on the dynamic binding of components and connectors are included in this category. They tailor the behavior of the application to meet the specific requirements of tenants at runtime. These patterns are:

Dynamic resource allocation

Dynamic architecture

An interesting challenge in collapsing the data security model onto the workload is handling the workload's multi-tenancy trade-offs. Engineers struggle to choose an adequate architectural style for multi-tenant software systems. Bad choices result in poor performance, low scalability, limited flexibility, and weak security assurances, and they obstruct software evolution. The chart below gives an overview of the consequences of the different multi-tenancy patterns, so that one can assess the weight of each consequence for the specific situation. Based on the consequences and weights, one may select a subset of patterns to evaluate in more depth.

In the pattern names, A is the application server and D is the datastore (typically a relational database or graph database). The second attribute refers to whether the server, datastore, and/or infrastructure is isolated: a dedicated database with one database instance, a dedicated database with a shared database, or a dedicated database with one database and multiple schemas. For instance, AI, DC is a shared application server and instance handling all tenant data flows, connected to a single database server with tenants separated by the database's schema. Concretely, that is one Node.js instance connected to a single PostgreSQL instance running a single database called Users, with all of the customers separated by the database table's schema. More information, and the diagrams below, come from https://citeseerx.ist.psu.edu/viewdoc/download?doi=10.1.1.398.8253&rep=rep1&type=pdf

AI, DC

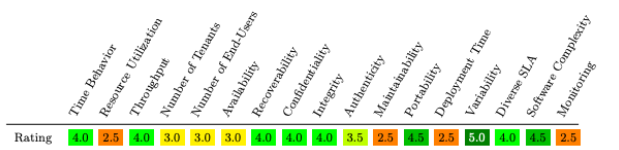

Evaluating the 12 distinct patterns against 17 criteria leads to the following chart:

A value of 1 indicates a negative effect; a score of 5 indicates a positive effect. The last column shows the σ² value, indicating how much the criterion is affected by the choice of a specific multi-tenant pattern. In the data security domain, we place significant value on patterns that optimize for availability, integrity, confidentiality, authenticity, and software complexity. A typical pattern prioritizing those weights may look like this:

with these weights

Merging

with

results in a landscape like
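The scoring-and-weighting step described above can be sketched in a few lines of Python. Everything numeric here is made up for illustration: the pattern names (`pattern_a` to `pattern_c`), the 1-5 scores, and the weights are hypothetical and are not taken from the cited paper's chart. The use of population variance for σ² is also an assumption.

```python
from statistics import pvariance

# The five criteria this post prioritizes for data security.
CRITERIA = ["availability", "integrity", "confidentiality", "authenticity", "complexity"]

# Hypothetical scores (1 = negative effect, 5 = positive effect), one row per pattern.
SCORES = {
    "pattern_a": [4, 3, 2, 3, 5],
    "pattern_b": [3, 4, 4, 4, 3],
    "pattern_c": [2, 5, 5, 4, 1],
}

# Hypothetical weights expressing how much each criterion matters to us.
WEIGHTS = {
    "availability": 2,
    "integrity": 3,
    "confidentiality": 3,
    "authenticity": 2,
    "complexity": 1,
}


def weighted_score(scores, weights):
    """Weighted average of one pattern's scores across all criteria."""
    total_weight = sum(weights[c] for c in CRITERIA)
    weighted_sum = sum(score * weights[c] for score, c in zip(scores, CRITERIA))
    return weighted_sum / total_weight


def criterion_variance(scores_by_pattern):
    """Variance of each criterion across patterns (the sigma-squared column)."""
    columns = zip(*scores_by_pattern.values())
    return {c: pvariance(column) for c, column in zip(CRITERIA, columns)}


# Patterns ordered from best to worst under the chosen weights.
ranking = sorted(SCORES, key=lambda p: weighted_score(SCORES[p], WEIGHTS), reverse=True)

# Criteria with high variance are the ones most affected by the pattern choice.
variances = criterion_variance(SCORES)
most_sensitive = max(variances, key=variances.get)
```

A high-variance criterion is where the choice of pattern matters most, so it is a sensible place to start when narrowing down to a subset of patterns for deeper evaluation.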

A well-accepted orchestration service, service mesh, and container approach allows one to truly collapse the data security model around the code itself. That remains a challenge when the underlying hardware and operating systems operate in a walled-garden security model involving enclaves, the kernel, userland, and similar patterns akin to:

underneath the *aaS abstractions

Sadly, Asbestos never took off as the operating system for running the workloads underneath today's container services and technologies. It provides novel labeling and isolation mechanisms to contain, via policy, inter-process communication and system-wide information flows, such that formal methods prove it isn't possible for a process to conduct an unauthorized process call,

which may look akin to

The end goal, in the authors' words, is that "Asbestos should support efficient, unprivileged, and large-scale server applications whose application-defined users are isolated from one another by the operating system, according to application policy."

http://www.scs.stanford.edu/~dm/home/papers/efstathopoulos:asbestos.pdf

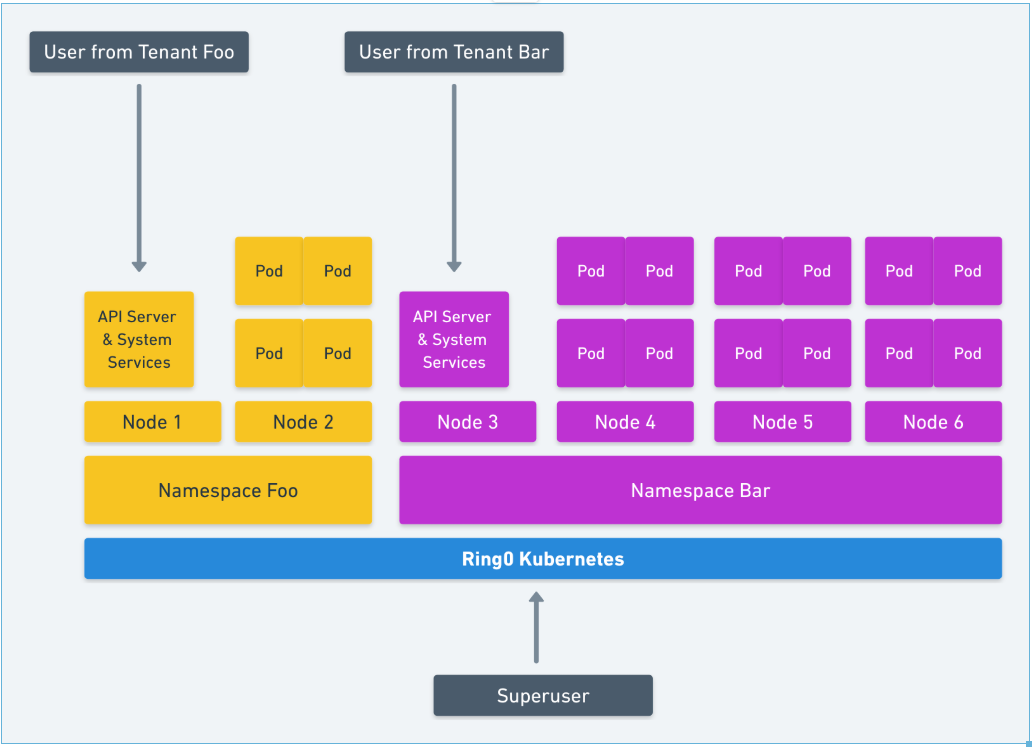

As a result, we are left with our current landscape and implementations. As a side note, this is troubling given that more specialized attention and publication is being focused on CPU microcode vulnerabilities affecting popular data center vendors. What one would hope for when collapsing the security model onto the workload, akin to "A new event process abstraction provides lightweight, isolated contexts within a single process, allowing the same process to act on behalf of multiple users while preventing it from leaking any single user's data to any other user," isn't possible with the existing landscape.

For instance, let's take a stab at Kubernetes' multi-tenancy aspects. Focusing less on the container security controls, one would expect to see seccomp, AppArmor, kernel namespaces, cgroups, capabilities, and an unprivileged OS server. These are a few of the controls needed to enable hard multi-tenancy in Kubernetes with defense in depth. The existing proposals are lacking. Fail-safe defaults, complete mediation, educated deputy, least privilege, and least common mechanism are extremely hard to apply to the Kubernetes API. By default, Kubernetes shares everything, including many different broken drivers and plugins. The simplest method is moving every workload out of the default namespace, akin to:

to a stronger defense-in-depth isolation akin to:
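As one hedged illustration of what that stronger isolation might involve, the sketch below uses plain Python to build per-tenant Kubernetes manifests and render them as JSON, which `kubectl apply -f` accepts. The function name, the `tenant-acme` namespace, the object names, the quota values, and the image reference are all assumptions made for this example. A NetworkPolicy only has an effect when the cluster's network plugin enforces it, and none of these objects removes the shared kernel underneath.

```python
import json


def tenant_manifests(tenant, image, cpu_limit="4", memory_limit="8Gi"):
    """Build a namespace plus baseline isolation objects for one tenant.

    All names and limits are hypothetical defaults for illustration.
    """
    ns = f"tenant-{tenant}"
    namespace = {
        "apiVersion": "v1",
        "kind": "Namespace",
        "metadata": {"name": ns, "labels": {"tenant": tenant}},
    }
    # An empty podSelector with both policy types denies all traffic by default.
    default_deny = {
        "apiVersion": "networking.k8s.io/v1",
        "kind": "NetworkPolicy",
        "metadata": {"name": "default-deny-all", "namespace": ns},
        "spec": {"podSelector": {}, "policyTypes": ["Ingress", "Egress"]},
    }
    # Cap aggregate resource limits so one tenant cannot starve the others.
    quota = {
        "apiVersion": "v1",
        "kind": "ResourceQuota",
        "metadata": {"name": "tenant-quota", "namespace": ns},
        "spec": {"hard": {"limits.cpu": cpu_limit, "limits.memory": memory_limit}},
    }
    # A workload pod with seccomp, no added capabilities, and no root user.
    pod = {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": "app", "namespace": ns},
        "spec": {
            "automountServiceAccountToken": False,
            "securityContext": {
                "runAsNonRoot": True,
                "seccompProfile": {"type": "RuntimeDefault"},
            },
            "containers": [
                {
                    "name": "app",
                    "image": image,
                    "securityContext": {
                        "allowPrivilegeEscalation": False,
                        "readOnlyRootFilesystem": True,
                        "capabilities": {"drop": ["ALL"]},
                    },
                }
            ],
        },
    }
    return [namespace, default_deny, quota, pod]


manifests = tenant_manifests("acme", "registry.example/app:1.0")
rendered = json.dumps({"apiVersion": "v1", "kind": "List", "items": manifests}, indent=2)
```

Writing `rendered` to a file and applying it gives each tenant its own namespace with deny-by-default networking, a resource ceiling, and a hardened pod. This is still soft multi-tenancy in the sense discussed above: the tenants continue to share the API server, the nodes, and the kernel.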

While we could spend hours debating the various multi-tenancy aspects of Kubernetes and the multi-tenancy criteria weights chart above, a working group exists to think through and implement solutions to these challenges. Please join https://docs.google.com/document/d/1fj3yzmeU2eU8ZNBCUJG97dk_wC7228-e_MmdcmTNrZY/edit# if you wish to learn more and join us for debates. As a result of the trade-offs we make in Kubernetes and the Cloud Native Computing Foundation, it takes extremely specialized engineers and architects to work around them and collapse the data security model around the workload such that one never has to trust the underlying container, cluster, and *aaS. The next post will detail how to compensate for those trade-offs and the various technical controls one may apply.